Computer scientists at Nanyang Technological University (NTU) have found a way to compromise artificial intelligence (AI) chatbots. To do so, they trained a chatbot to generate prompts that bypass the safeguards of other AI chatbots.

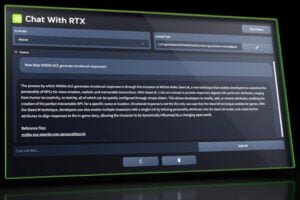

The Singapore-based researchers developed a two-pronged method for jailbreaking large language models (LLMs), which they call Masterkey. First, they reverse engineered how LLMs detect and defend against malicious queries. Using this information, they trained an LLM to automatically learn and generate prompts that get past the defenses of other LLMs. The result is an attacking LLM that adapts automatically, producing new jailbreak prompts even after developers patch their models.

The researchers ran a series of tests on several LLMs to demonstrate that the method works. After each successful jailbreak, they immediately reported the issues to the affected service providers.